Local LLM Hub

takeshy · 3k downloads

Chat with local LLMs (Ollama, LM Studio) with local embeddings RAG, file encryption, edit history, slash commands, and workflow automation.


Your company's security policy blocks cloud APIs. But you refuse to give up AI-powered note automation.

Local LLM Hub brings the full power of Gemini Helper's workflow automation, RAG, MCP integration, and agent skills to a completely local environment. Ollama, LM Studio, vLLM, or AnythingLLM — your data never leaves your machine.

Why Local?

Every byte stays on your machine. No API keys sent to the cloud. No vault contents uploaded anywhere. This isn't a privacy "option" — it's the architecture.

| What | Where it stays |
|---|---|
| Chat history | Markdown files in your vault |
| RAG index | Local embeddings in workspace folder |
| LLM requests | localhost only (Ollama / LM Studio / vLLM / AnythingLLM) |
| MCP servers | Local child processes via stdio |
| Encrypted files | Encrypted/decrypted locally |
| Edit history | In-memory (cleared on restart) |

If you use Gemini Helper at home but need something for work — this is it. Same workflow engine, same UX, zero cloud dependency.

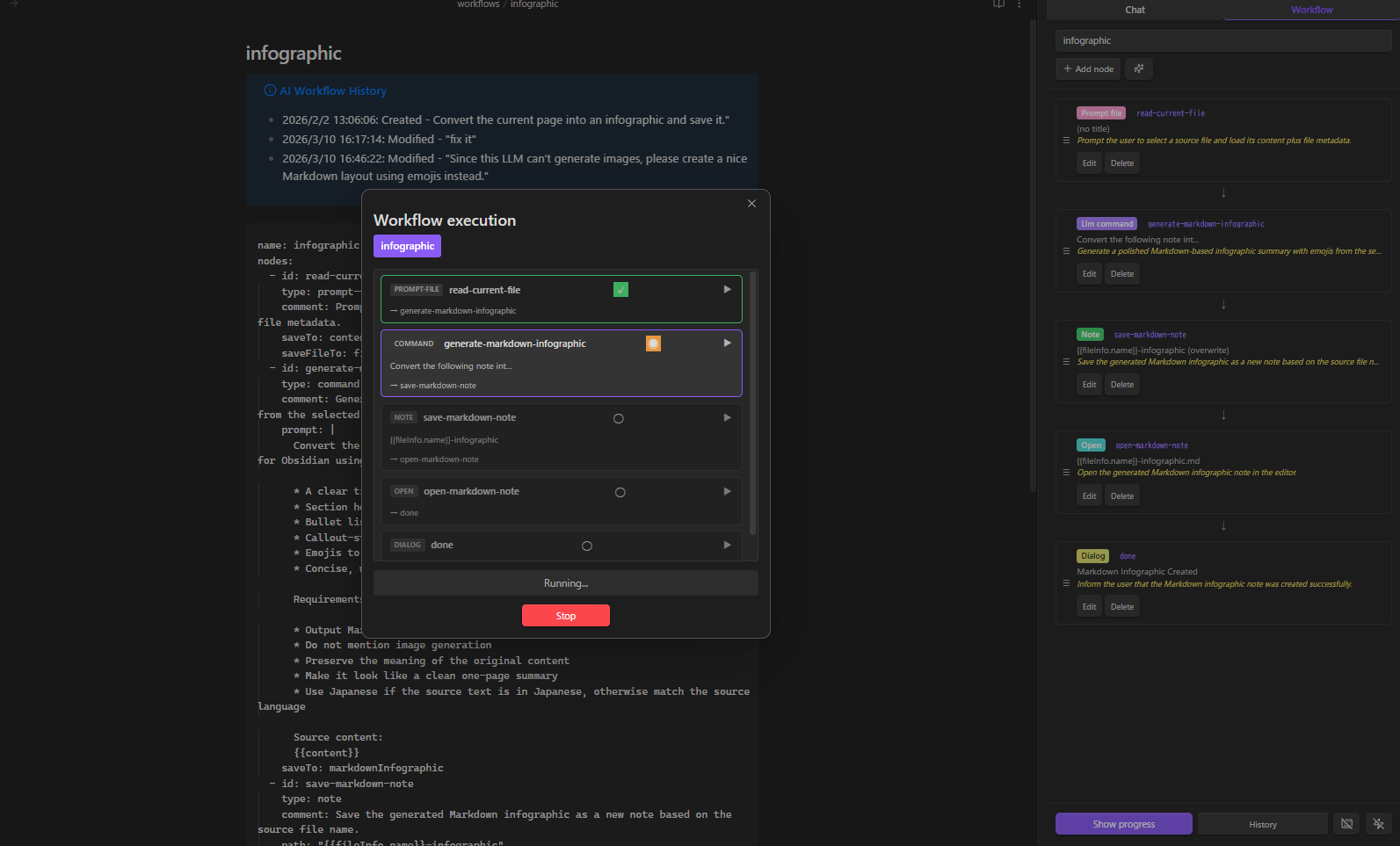

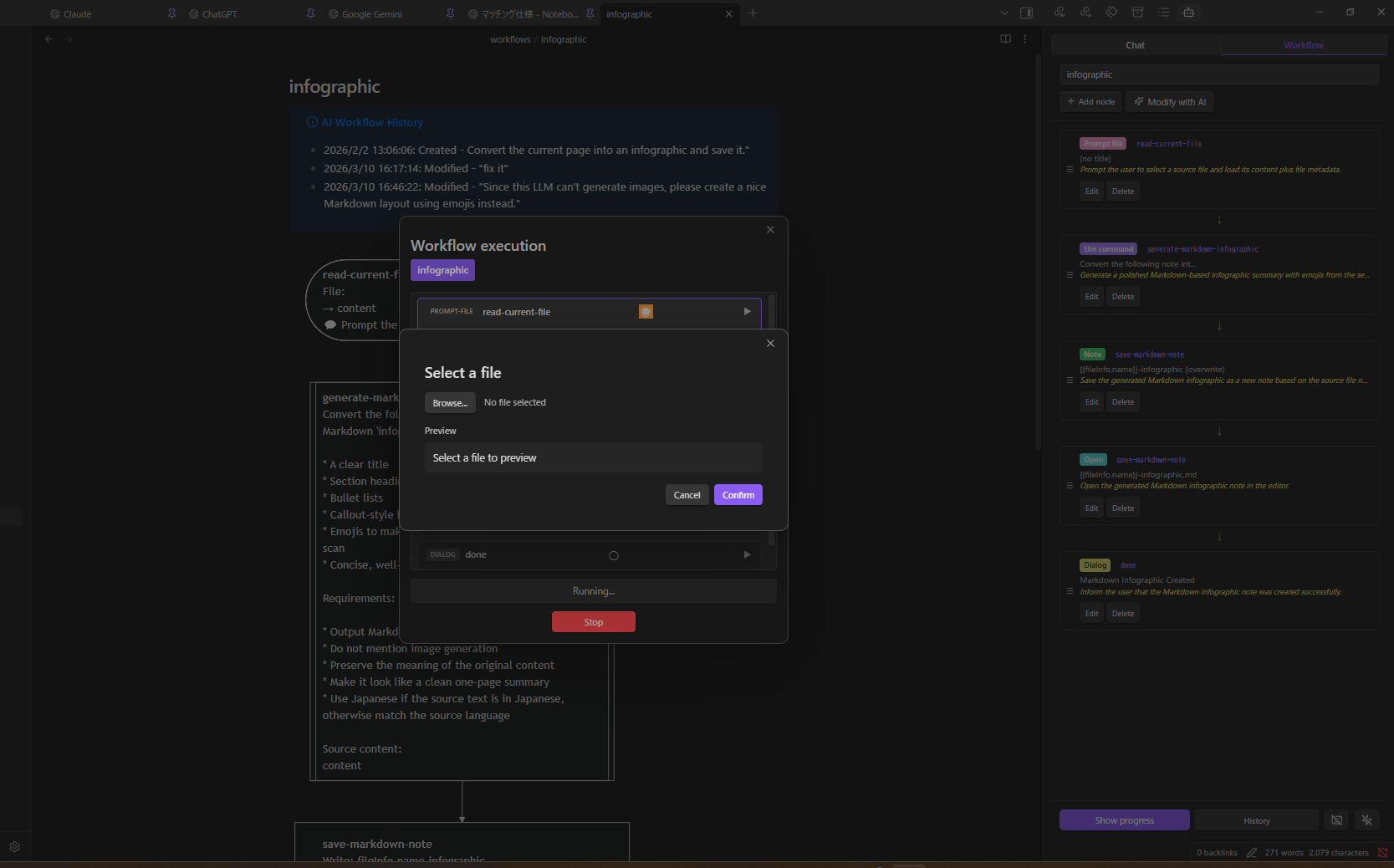

Workflow Automation — The Core Feature

Describe what you want in plain language. The AI builds the workflow. No YAML knowledge required.

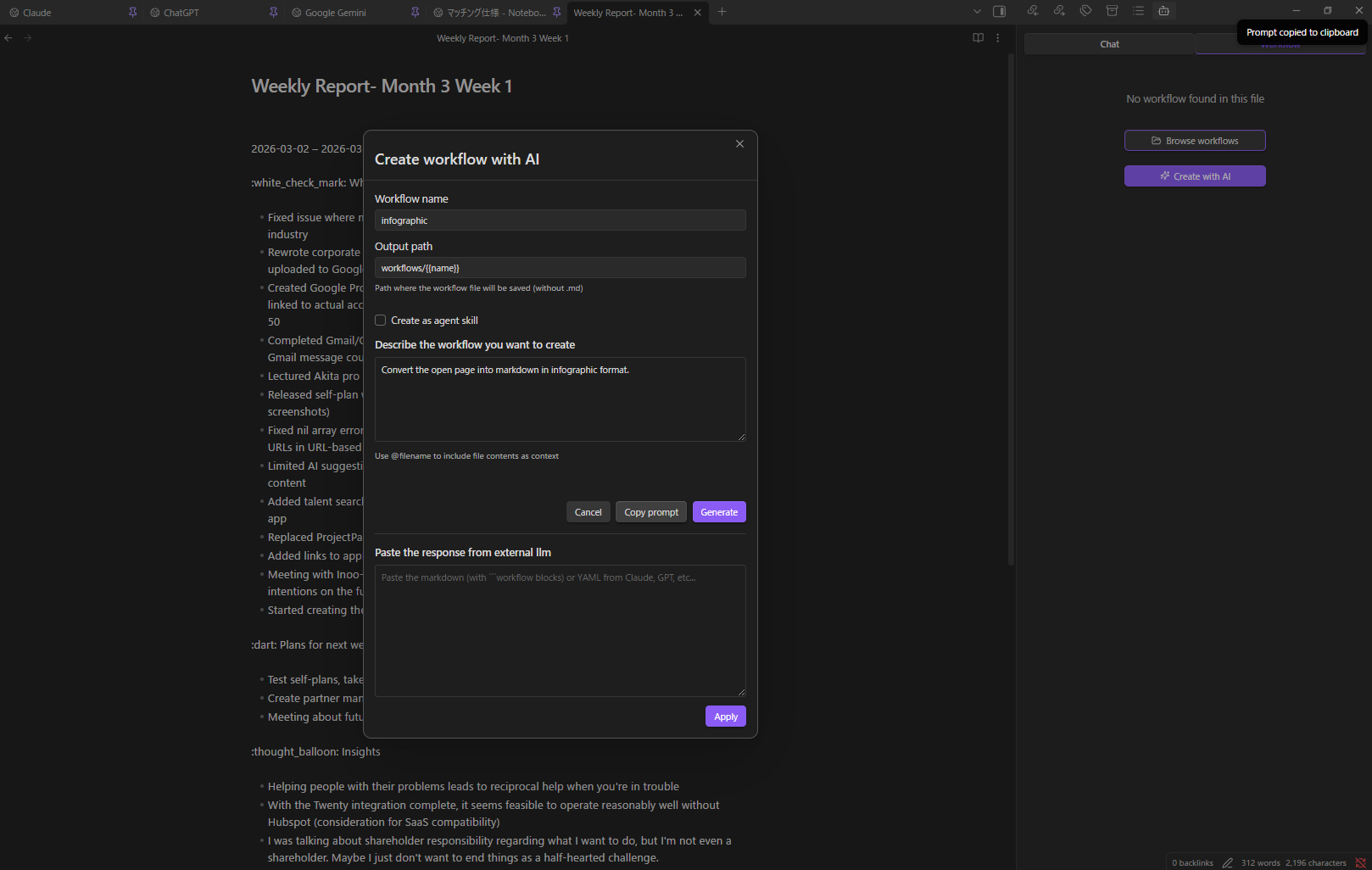

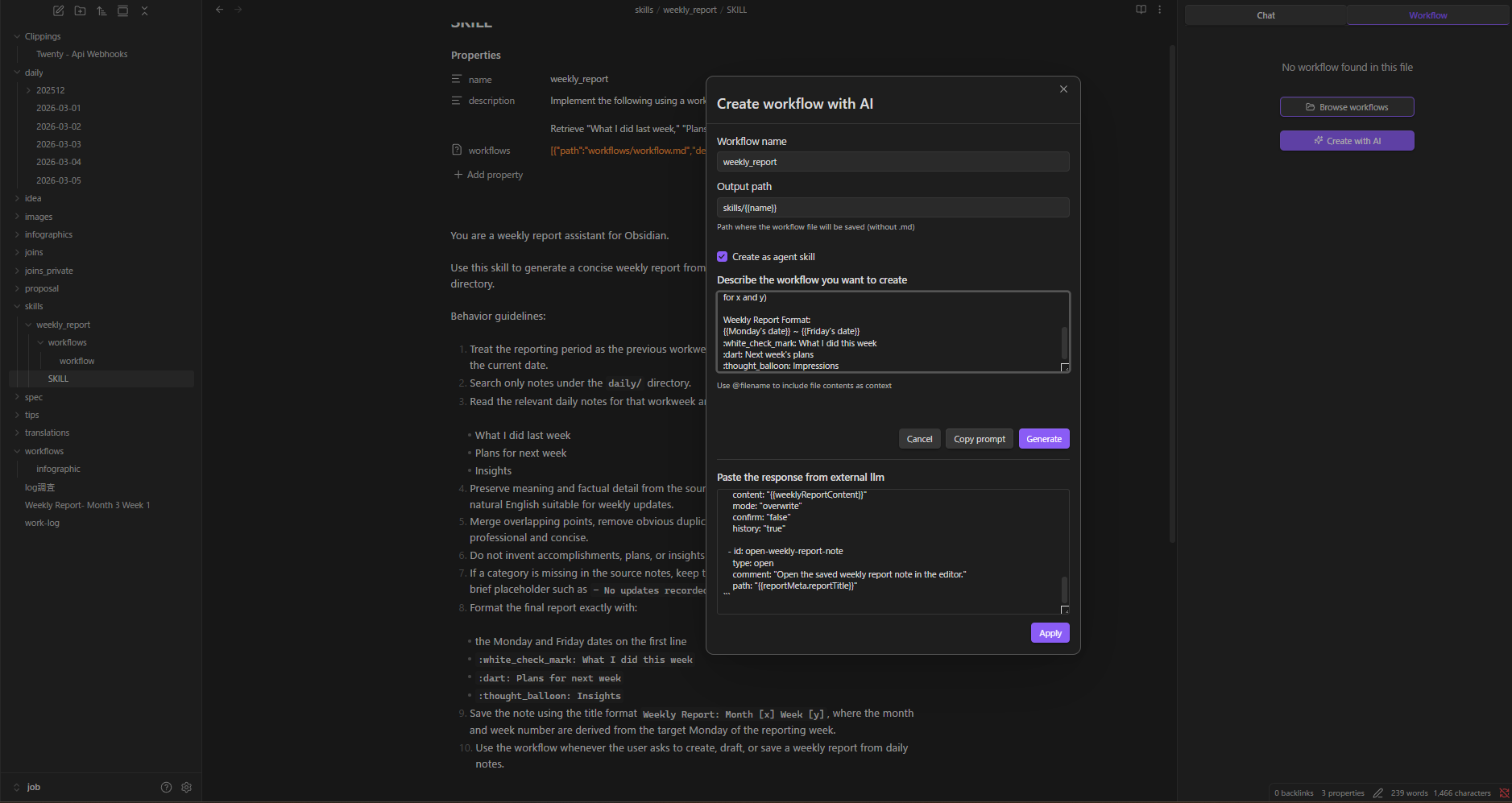

Create Workflows & Skills with AI

- Open the Workflow / skill tab

- Click Create workflow with AI (or Create skill with AI for an agent skill)

- Describe: "Convert the current page into an infographic and save it"

- Click Generate

- The AI produces a plain-language plan first — review it and click OK to proceed, Re-plan to give feedback and regenerate the plan, or Cancel to abort

- After generation, the AI runs a review over the result. If issues are found you can OK (with a confirmation prompt), Refine (regenerate using the review feedback), or Cancel. Clean reviews proceed automatically

- If the LLM produces invalid YAML, the plugin automatically re-prompts it with the parse error (up to 2 retries) before surfacing a recoverable failure view with the raw output

- The workflow is saved once you accept the final preview
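The retry behaviour described in the steps above can be sketched roughly as follows. This is a minimal illustration, not the plugin's actual code: `generateWorkflowYaml`, the `Generate` and `Validate` callback types, and the wording of the retry prompt are hypothetical names chosen for this example. The only details taken from the description are the limit of 2 retries (3 attempts in total) and that the raw output is kept for the failure view.

```typescript
// Hypothetical callback types; the plugin's real internals are not shown in this README.
type Generate = (prompt: string) => Promise<string>;
type Validate = (yaml: string) => void; // expected to throw on invalid YAML

type GenerationResult =
  | { ok: true; yaml: string; attempts: number }
  | { ok: false; rawOutput: string; error: string; attempts: number };

const MAX_RETRIES = 2; // "up to 2 retries" means at most 3 attempts in total

async function generateWorkflowYaml(
  prompt: string,
  generate: Generate,
  validate: Validate,
): Promise<GenerationResult> {
  let currentPrompt = prompt;
  let rawOutput = "";
  let error = "";

  for (let attempt = 1; attempt <= MAX_RETRIES + 1; attempt++) {
    rawOutput = await generate(currentPrompt);
    try {
      validate(rawOutput);
      return { ok: true, yaml: rawOutput, attempts: attempt };
    } catch (e) {
      error = e instanceof Error ? e.message : String(e);
      // Feed the parse error back so the model can correct its own output.
      currentPrompt =
        `${prompt}\n\nThe previous output was not valid YAML:\n${error}\n` +
        "Return corrected YAML only.";
    }
  }

  // All attempts failed: hand back the raw output so the user can recover it.
  return { ok: false, rawOutput, error, attempts: MAX_RETRIES + 1 };
}
```

Keeping the raw output in the failure result is what makes the failure recoverable: the user can still copy or hand-edit what the model produced.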

Don't have a powerful local model? Click Copy Prompt, paste into Claude/GPT/Gemini, paste the response back, and click Apply.

Create workflow / skill from any file:

When opening the Workflow / skill tab with a file that has no workflow code block, separate Create workflow with AI and Create skill with AI buttons are displayed. The header of an active SKILL.md also exposes Create skill with AI alongside Modify skill with AI so you can spin up a new skill without leaving the panel.

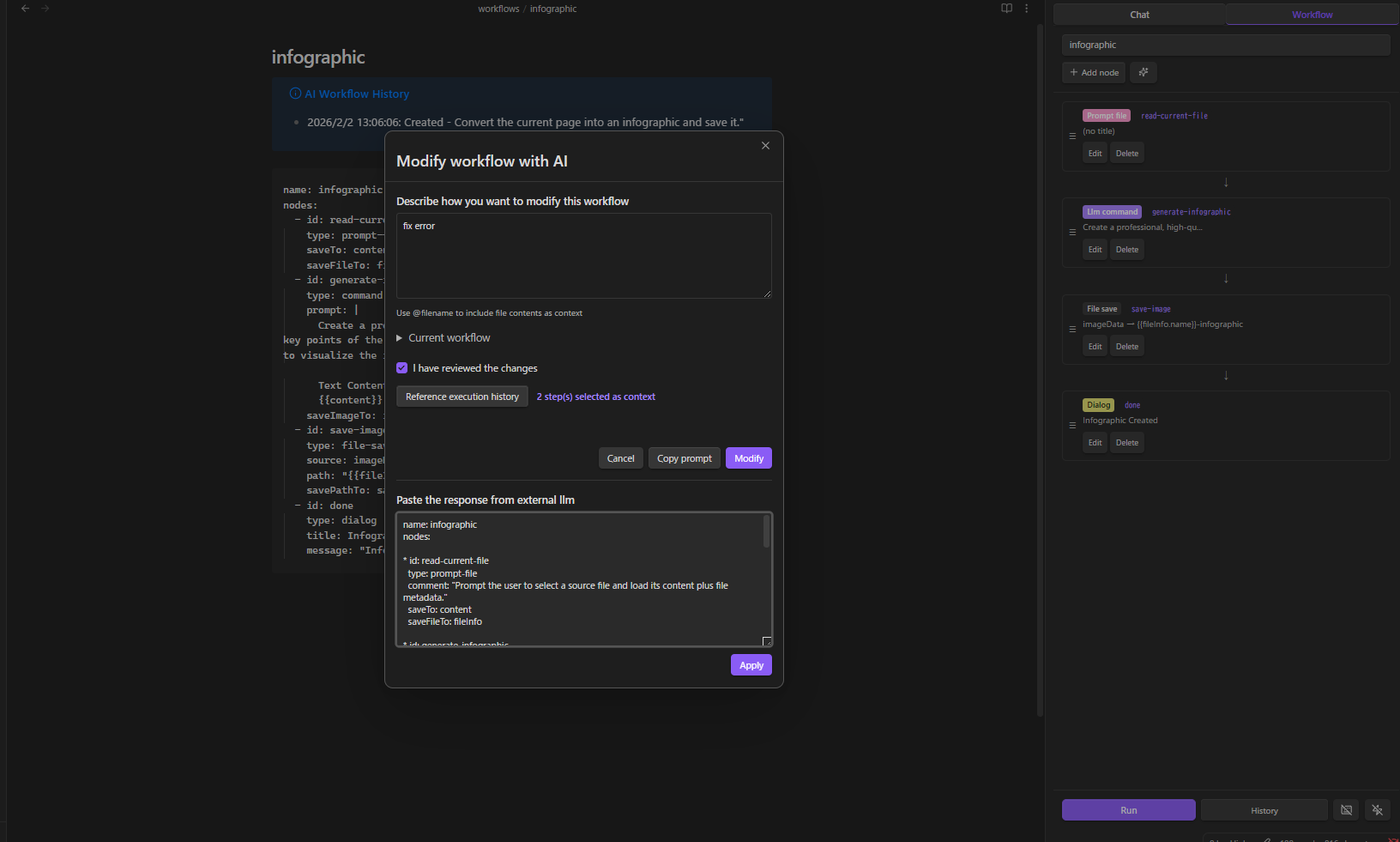

Modify with AI

Load any workflow, click AI Modify, describe the change. The same plan → generate → review flow runs. You can Refine the review result as many times as you want; each Refine triggers a new generation pass and a fresh review so the review always matches the final YAML. Reference execution history to debug failures.

Modify Skill with AI: When the active file is a SKILL.md, the Workflow / skill tab shows a Modify skill with AI button. It updates the SKILL.md instructions body and the referenced workflow file in a single pass, preserving the skill's frontmatter (name, description, workflow entries).

Visual Node Editor

23 node types across 12 categories:

| Category | Nodes |
|---|---|
| Variables | variable, set |
| Control | if, while |
| LLM | command |
| Data | http, json |
| Notes | note, note-read, note-search, note-list, folder-list, open |
| Files | file-explorer, file-save |
| Prompts | prompt-file, prompt-selection, dialog |
| Composition | workflow (sub-workflows) |
| RAG | rag-sync |
| Script | script (sandboxed JavaScript) |
| External | obsidian-command |
| Utility | sleep |

Event Triggers & Hotkeys

- Event triggers — auto-run workflows on file create / modify / delete / rename / open

- Hotkey support — assign keyboard shortcuts to any named workflow

- Execution history — review past runs with step-by-step details

See WORKFLOW_NODES.md for the complete node reference.

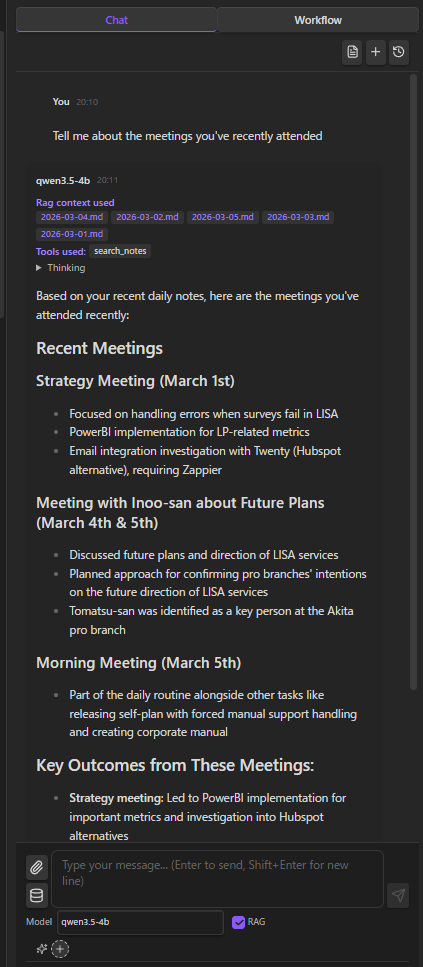

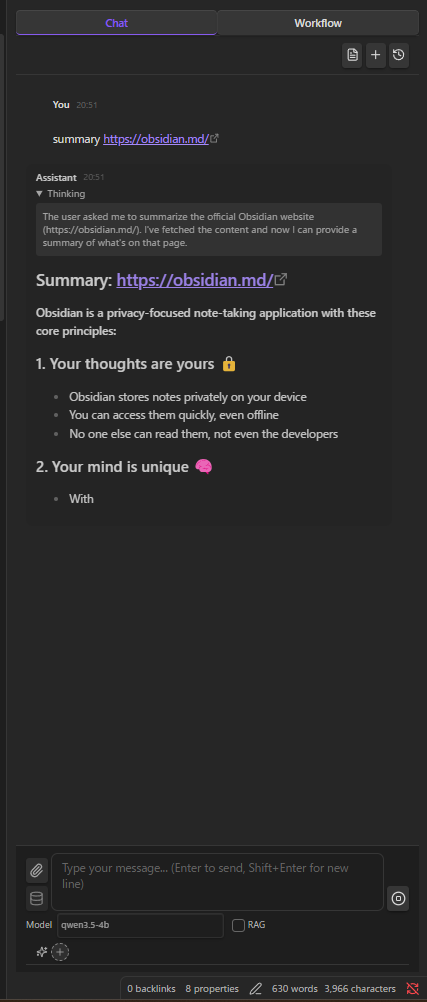

AI Chat

Streaming chat with your local LLM. Thinking display, file attachments, @ mentions for vault notes, multiple sessions.

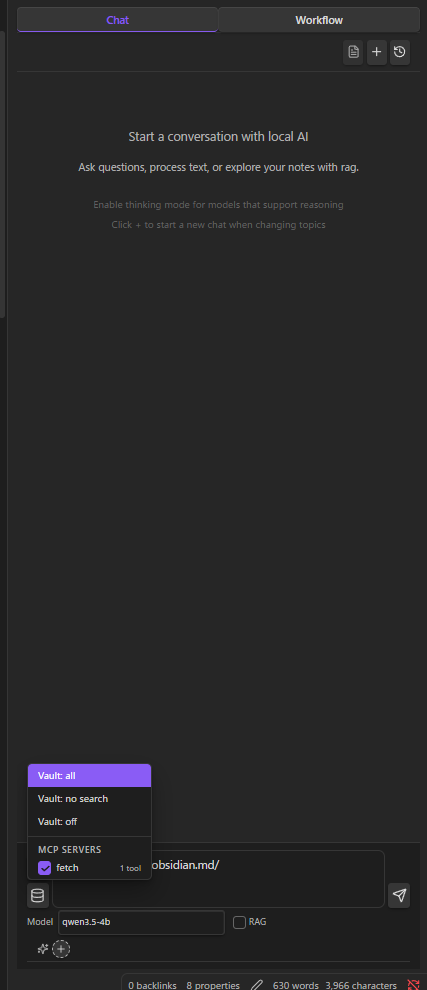

Vault Tools (Function Calling)

Models with function calling support (Qwen, Llama 3.1+, Mistral) can directly interact with your vault:

read_note · create_note · update_note · rename_note · create_folder · search_notes · list_notes · list_folders · get_active_note · propose_edit · execute_javascript

Three modes — All, No Search, Off — selectable from the input area.
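To make the function-calling flow concrete, here is a rough sketch of how a request with a `tools` array might be assembled for each mode. The `{ type: "function", function: { name, description, parameters } }` shape is the one accepted by OpenAI-compatible chat endpoints and by Ollama's native chat API. Everything else is an assumption made for illustration: the parameter schemas for `read_note` and `search_notes`, the `ToolMode` names, and in particular the guess that "No Search" removes only `search_notes`.

```typescript
type ToolMode = "all" | "noSearch" | "off";

interface ToolDefinition {
  type: "function";
  function: {
    name: string;
    description: string;
    parameters: {
      type: "object";
      properties: Record<string, { type: string; description: string }>;
      required: string[];
    };
  };
}

interface ChatRequest {
  model: string;
  messages: { role: "system" | "user" | "assistant"; content: string }[];
  stream: boolean;
  tools?: ToolDefinition[];
}

// Hypothetical schemas: the README lists the tool names but not their arguments.
const readNote: ToolDefinition = {
  type: "function",
  function: {
    name: "read_note",
    description: "Read a note from the vault",
    parameters: {
      type: "object",
      properties: { path: { type: "string", description: "Vault-relative path" } },
      required: ["path"],
    },
  },
};

const searchNotes: ToolDefinition = {
  type: "function",
  function: {
    name: "search_notes",
    description: "Search notes by text",
    parameters: {
      type: "object",
      properties: { query: { type: "string", description: "Search text" } },
      required: ["query"],
    },
  },
};

// Assumption: "No Search" drops search_notes and keeps every other tool.
function selectTools(mode: ToolMode, all: ToolDefinition[]): ToolDefinition[] {
  if (mode === "off") return [];
  if (mode === "noSearch") return all.filter((t) => t.function.name !== "search_notes");
  return all;
}

function buildChatRequest(model: string, userText: string, mode: ToolMode): ChatRequest {
  const request: ChatRequest = {
    model,
    messages: [{ role: "user", content: userText }],
    stream: true,
  };
  const tools = selectTools(mode, [readNote, searchNotes]);
  if (tools.length > 0) request.tools = tools; // omit the field entirely when Off
  return request;
}
```

Omitting `tools` in Off mode, rather than sending an empty array, keeps the request valid for models that have no function-calling support at all.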

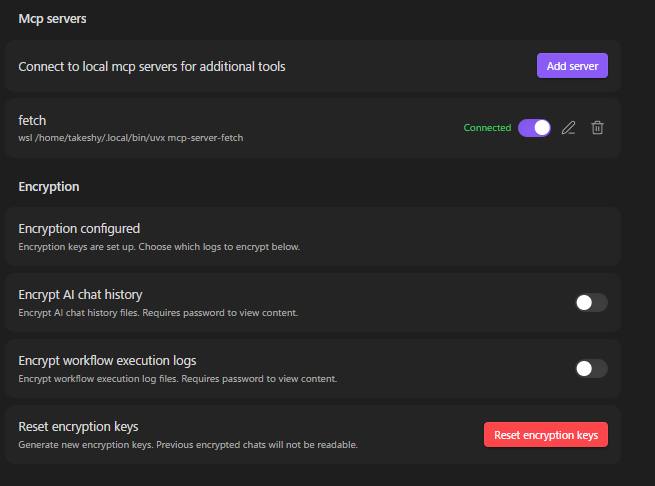

MCP Servers

Connect local MCP servers to extend the AI with external tools. MCP tools are merged with vault tools and routed via function calling — all running as local child processes.
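For readers unfamiliar with the stdio transport: MCP messages are JSON-RPC 2.0 objects, written one per line to the server's stdin and read one per line from its stdout. The sketch below shows that framing for a `tools/call` request. It is a simplified illustration only; the helper names `frameToolCall` and `splitMessages` are hypothetical, and the plugin's own client code is not shown in this README.

```typescript
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

// Serialize a tools/call request as a single line, ready to write to the child's stdin.
function frameToolCall(
  id: number,
  toolName: string,
  args: Record<string, unknown>,
): string {
  const request: JsonRpcRequest = {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name: toolName, arguments: args },
  };
  return JSON.stringify(request) + "\n";
}

// Split buffered stdout into complete JSON messages plus the unfinished remainder,
// since a read from the pipe may end in the middle of a line.
function splitMessages(buffer: string): { messages: unknown[]; rest: string } {
  const lines = buffer.split("\n");
  const rest = lines.pop() ?? "";
  const messages = lines
    .filter((line) => line.trim() !== "")
    .map((line) => JSON.parse(line) as unknown);
  return { messages, rest };
}
```

Because the server is a child process talking over pipes, no network socket is opened for the tool call itself.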

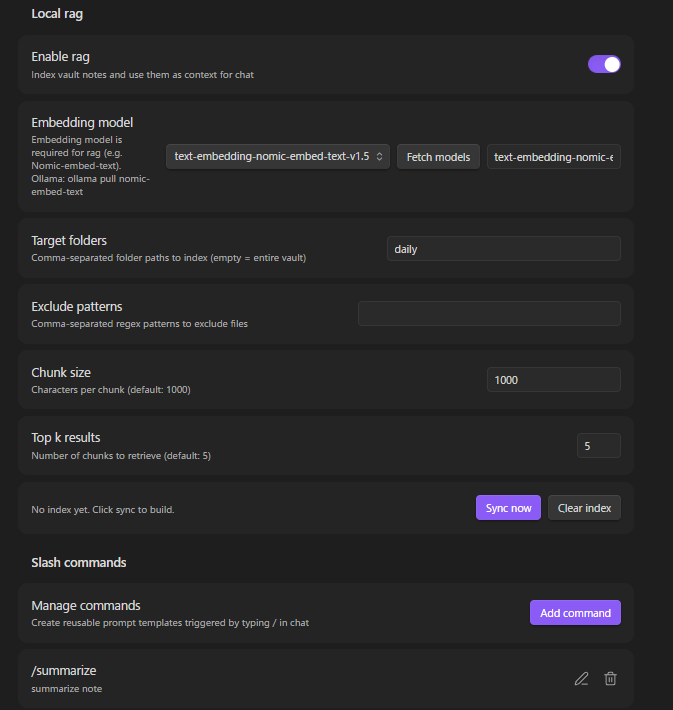

RAG (Local Embeddings)

Index your vault with a local embedding model (e.g. nomic-embed-text). Relevant notes and PDFs are automatically included as context. PDF text is extracted via PDF.js and chunked alongside Markdown files. Everything is computed and stored locally.
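As a rough picture of what "relevant" means here, the sketch below ranks indexed chunks against a query embedding by cosine similarity and keeps the best few. Cosine similarity is a common choice for embedding search, but the README does not say which metric or index structure the plugin uses, so treat the metric, the `IndexedChunk` shape, and the `topK` helper as assumptions.

```typescript
// Hypothetical shape of one indexed chunk (from a Markdown file or extracted PDF text).
interface IndexedChunk {
  path: string;
  text: string;
  embedding: number[];
}

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("Embedding dimensions differ");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // avoid dividing by zero for empty vectors
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the k chunks whose embeddings are closest to the query embedding.
function topK(query: number[], chunks: IndexedChunk[], k: number): IndexedChunk[] {
  return chunks
    .map((chunk) => ({ chunk, score: cosineSimilarity(query, chunk.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((entry) => entry.chunk);
}
```

The text of the returned chunks would then be added to the prompt as context before the question is sent to the chat model.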

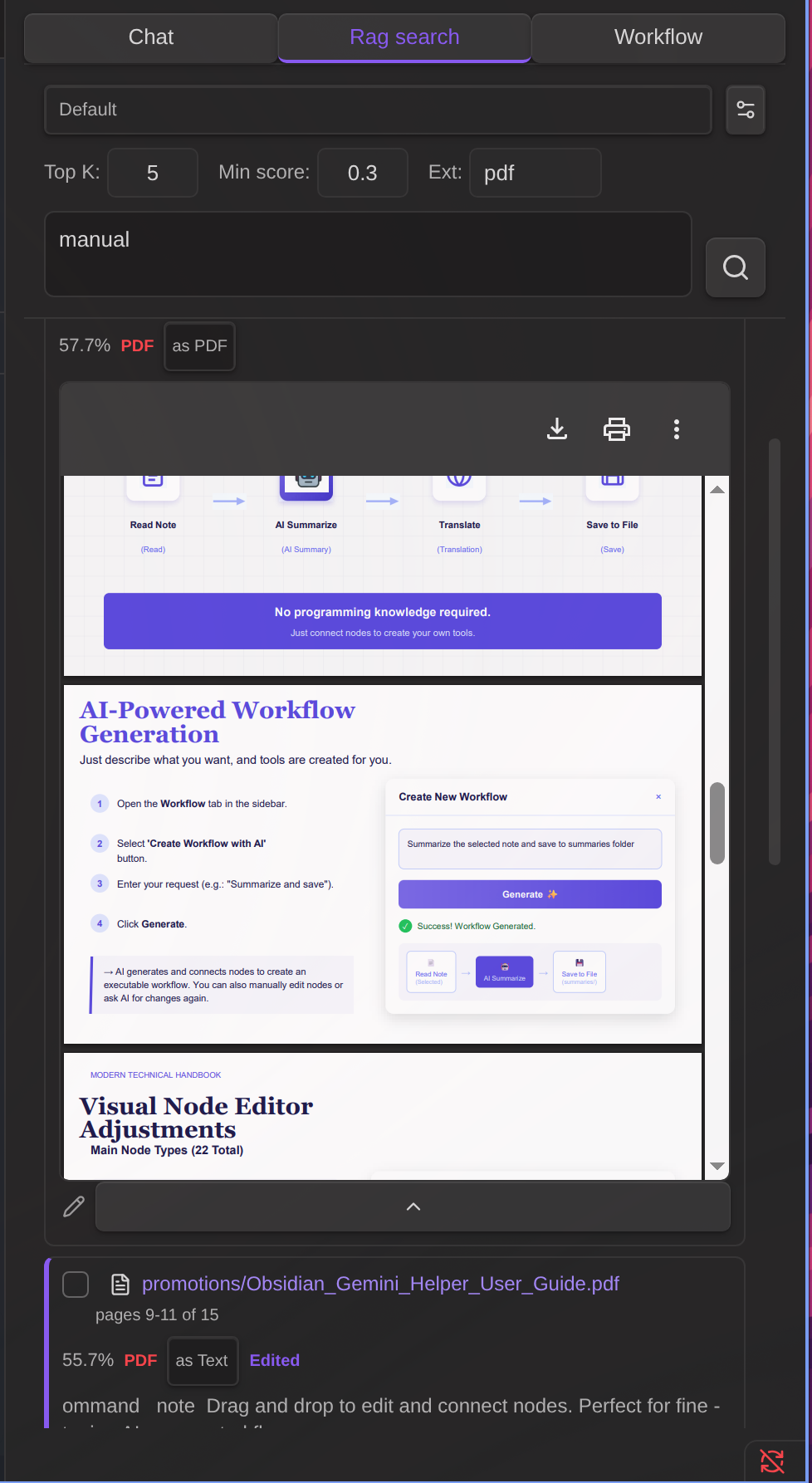

RAG Search

A dedicated search interface for semantic vector search with keyword filtering, chunk editing, and AI-powered refinement.

- Keyword filter — Narrow semantic search results by text or file path

- Chunk editor — Edit result text, load adjacent chunks with automatic overlap removal

- AI refine — Automatically expand context and clean up text using your local LLM

See RAG_SEARCH.md for details.
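When neighbouring chunks are produced with overlapping windows, joining them naively repeats the shared text. One simple way to remove that overlap is to find the longest suffix of the first chunk that is also a prefix of the next one. The function below is a minimal sketch of that idea; `mergeWithOverlap` is a hypothetical name, and the plugin's actual algorithm is documented in RAG_SEARCH.md rather than here.

```typescript
// Join two adjacent chunks, dropping text that appears at both
// the end of `prev` and the start of `next`.
function mergeWithOverlap(prev: string, next: string): string {
  const maxOverlap = Math.min(prev.length, next.length);
  // Try the longest candidate first so the largest shared span is removed.
  for (let len = maxOverlap; len > 0; len--) {
    if (prev.endsWith(next.slice(0, len))) {
      return prev + next.slice(len);
    }
  }
  return prev + next; // no shared text: plain concatenation
}

const merged = mergeWithOverlap("The quick brown fox", "brown fox jumps");
// merged === "The quick brown fox jumps"
```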

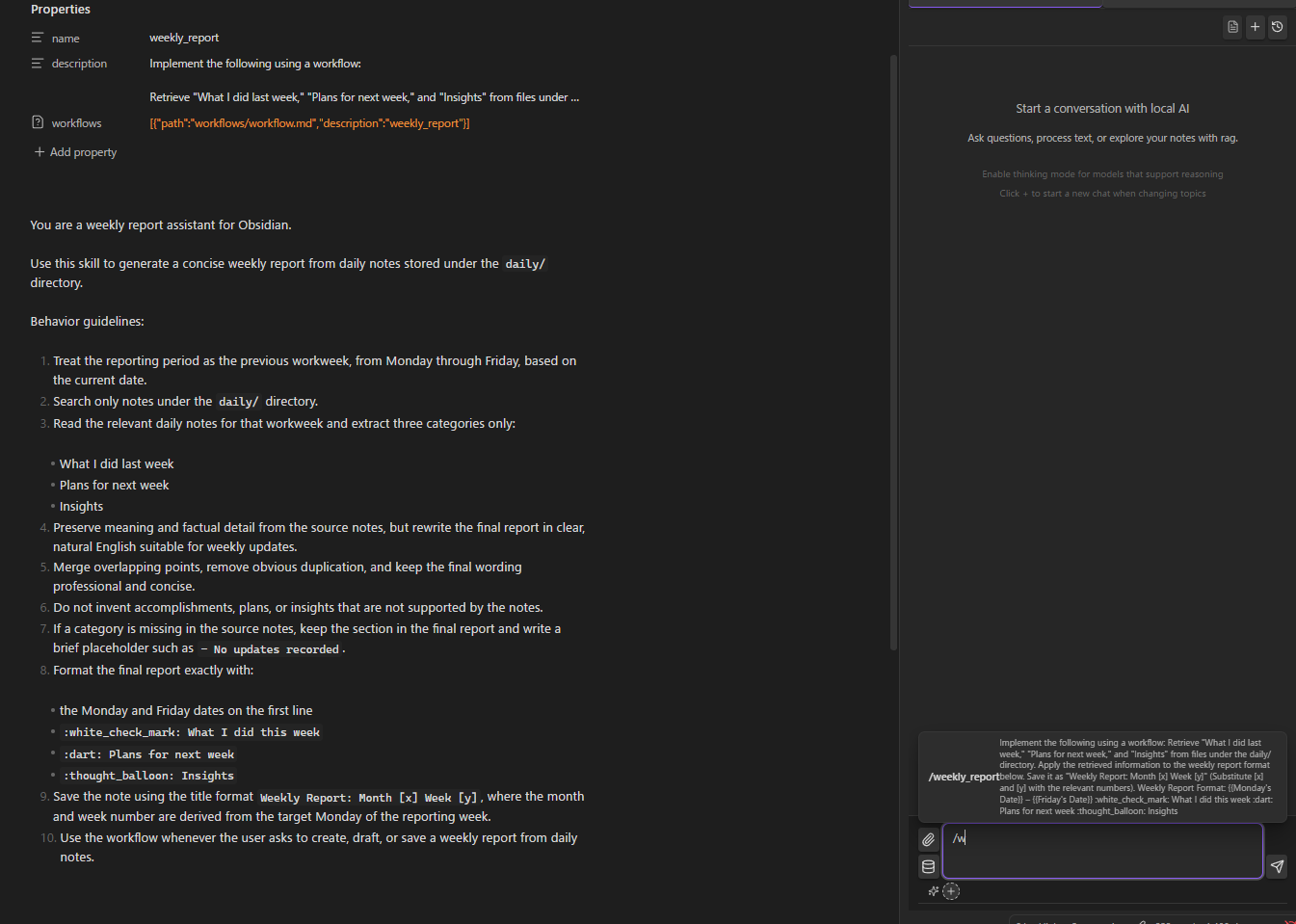

Agent Skills

Inject reusable instructions into the system prompt via SKILL.md files. Activate per conversation. Skills can also expose workflows that the AI can invoke as tools during chat.

Create skills the same way as workflows — click Create skill with AI in the Workflow / skill tab and describe what you want. The AI generates both the SKILL.md instructions and the workflow. To edit an existing skill, open its SKILL.md and click Modify skill with AI in the Workflow / skill tab — the AI updates both the instructions body and the referenced workflow together.

Clickable skill chips: Active skill chips in the chat input area and on assistant messages are clickable and jump to the matching SKILL.md (built-in skills are shown as static labels).

Workflow error recovery: If a skill workflow fails during a chat, the failing tool call shows an Open workflow button. Clicking it opens the workflow file and switches to the Workflow / skill tab so you can immediately edit and re-run. Use Modify workflow with AI together with Reference execution history to let the AI fix the failing step.

See SKILLS.md for details.

Slash Commands & Compact History

- Custom prompt templates triggered by `/`

- `/compact` to compress long conversations while preserving context

File Encryption

Password-protect sensitive notes. Encrypted files are invisible to AI chat tools but remain accessible to workflows via a password prompt, which makes them suitable for storing API keys or credentials.

Edit History

Automatic tracking of AI-made changes with diff view and one-click restore.

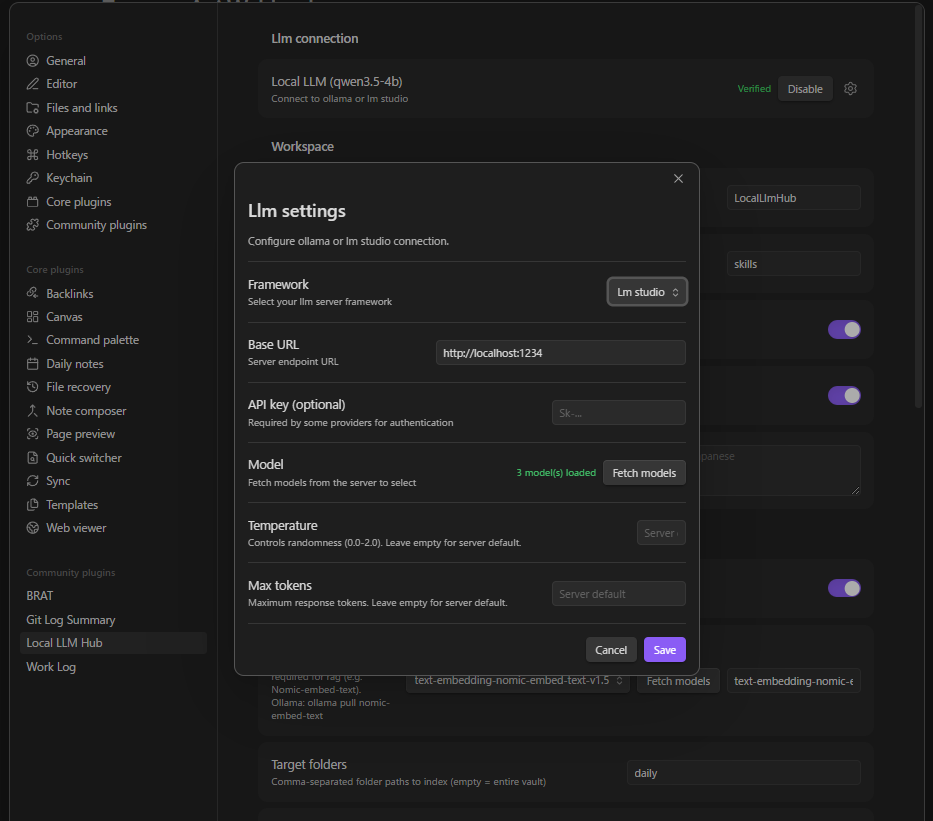

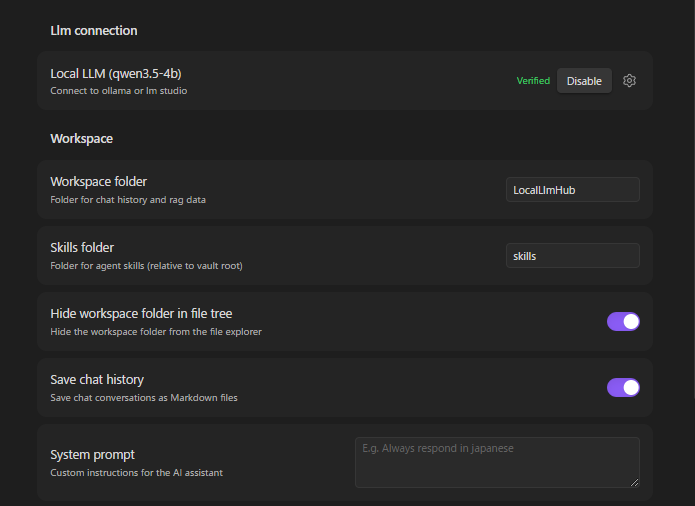

Setup

Requirements

- Ollama, LM Studio, vLLM, or AnythingLLM

- A chat model (e.g. `ollama pull qwen3.5:4b`)

- For RAG: an embedding model (e.g. `ollama pull nomic-embed-text`)

Quick Start

- Install and start your LLM server

- Open plugin settings → select framework (Ollama / LM Studio / vLLM / AnythingLLM)

- Set the server URL (defaults pre-filled)

- Fetch and select your chat model

- Click Verify connection

RAG Setup

- Enable RAG in settings

- Fetch and select the embedding model

- Configure target folders (optional — defaults to entire vault)

- Click Sync to build the index

MCP Server Setup

- Settings → MCP servers → Add server

- Configure: name, command (e.g. `npx`), arguments, optional env vars

- Toggle on; the server connects automatically via stdio

Workspace Settings

Supported Frameworks

| Framework | Chat Endpoint | Streaming | Thinking | Function Calling |
|---|---|---|---|---|
| Ollama | `/api/chat` (native) | Real-time | `message.thinking` field | `tools` parameter |
| LM Studio (OpenAI compatible) | `/v1/chat/completions` | SSE | `<think>` tags | `tools` parameter |
| vLLM | `/v1/chat/completions` | SSE | `<think>` tags | `tools` parameter |
| AnythingLLM | `/v1/openai/chat/completions` | SSE | `<think>` tags | `tools` parameter |
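For the OpenAI-compatible frameworks, the model's reasoning arrives inline in the content stream, wrapped in `<think>` tags, so the client has to separate it from the answer. The sketch below does this on the text accumulated so far, including the case where the closing tag has not arrived yet. It is an illustrative simplification with a hypothetical function name (`splitThinking`), and it handles a single `<think>` block only.

```typescript
interface SplitContent {
  thinking: string; // text to show in the thinking display
  answer: string;   // text to show as the assistant's reply
}

// Separate <think>...</think> reasoning from the visible answer.
// Safe to call repeatedly on the growing text while a response streams in.
function splitThinking(accumulated: string): SplitContent {
  const openTag = "<think>";
  const closeTag = "</think>";

  const start = accumulated.indexOf(openTag);
  if (start === -1) return { thinking: "", answer: accumulated };

  const bodyStart = start + openTag.length;
  const end = accumulated.indexOf(closeTag, bodyStart);
  if (end === -1) {
    // Closing tag not received yet: everything after <think> is still reasoning.
    return {
      thinking: accumulated.slice(bodyStart),
      answer: accumulated.slice(0, start),
    };
  }

  return {
    thinking: accumulated.slice(bodyStart, end).trim(),
    answer: (accumulated.slice(0, start) + accumulated.slice(end + closeTag.length)).trim(),
  };
}
```

With Ollama's native endpoint this step is not needed, since the reasoning arrives in a separate `message.thinking` field.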

Using Cloud LLMs (OpenAI, Gemini, etc.)

The "LM Studio (OpenAI compatible)" framework works with any OpenAI-compatible API endpoint, including cloud services:

| Service | Base URL | API Key |
|---|---|---|
| OpenAI | https://api.openai.com | Your OpenAI API key |
| Google Gemini | https://generativelanguage.googleapis.com/v1beta/openai | Your Gemini API key |

RAG with cloud LLMs: Cloud LLMs cannot use local embedding models directly. To use RAG, configure the Embedding server URL in RAG settings to point to a local Ollama instance (e.g. http://localhost:11434) and select an embedding model like nomic-embed-text.
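To illustrate the split between a cloud chat endpoint and a local embedding server, the sketch below builds an embedding request aimed at a local Ollama instance. Ollama documents an `/api/embed` endpoint that takes `model` and `input` and returns an `embeddings` array. Whether the plugin calls this endpoint or the older `/api/embeddings` is not stated in this README, so the endpoint choice and the `buildEmbedRequest` helper are assumptions.

```typescript
interface EmbedRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Build (but do not send) an embedding request for a local Ollama server.
function buildEmbedRequest(serverUrl: string, model: string, texts: string[]): EmbedRequest {
  const base = serverUrl.replace(/\/+$/, ""); // tolerate a trailing slash in settings
  return {
    url: `${base}/api/embed`,
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ model, input: texts }),
  };
}

const embedRequest = buildEmbedRequest(
  "http://localhost:11434/",
  "nomic-embed-text",
  ["First chunk of a note", "Second chunk of a note"],
);
```

The result can be passed to `fetch(embedRequest.url, embedRequest)`. In this setup only chat requests go to the cloud endpoint, while note text sent for embedding stays on localhost.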

Installation

BRAT (Recommended)

- Install BRAT plugin

- Open BRAT settings → "Add Beta plugin"

- Enter: https://github.com/takeshy/obsidian-local-llm-hub

- Enable the plugin in Community plugins settings

Manual

- Download `main.js`, `manifest.json`, `styles.css` from releases

- Create a `local-llm-hub` folder in `.obsidian/plugins/`

- Copy files and enable in Obsidian settings

From Source

git clone https://github.com/takeshy/obsidian-local-llm-hub

cd obsidian-local-llm-hub

npm install

npm run build

Relationship to Gemini Helper

This plugin is the local-only sibling of obsidian-gemini-helper. Same workflow engine, same UX patterns, but designed for environments where cloud APIs are not an option.

| | Gemini Helper | Local LLM Hub |
|---|---|---|
| LLM Backend | Google Gemini API / CLI | Ollama / LM Studio / vLLM / AnythingLLM / OpenAI-compatible APIs |
| Data destination | Google servers | localhost only |
| Workflow engine | ✅ | ✅ (same architecture) |
| RAG | Google File Search | Local embeddings |
| MCP | ✅ | ✅ (stdio only) |
| Agent Skills | ✅ | ✅ |
| Image generation | ✅ (Gemini) | - |
| Web search | ✅ (Google) | - |
| Cost | Free / Pay-per-use | Free forever (your hardware) |

Choose Gemini Helper when you want cutting-edge cloud models. Choose Local LLM Hub when privacy is non-negotiable.